CS Researchers Present Findings on Machine Learning, Algorithms

January 14, 2020

Harvey Mudd College computer science professor George Montañez and his students have turned their research on theoretical machine learning, probability, statistics and search into multiple publications and presentations.

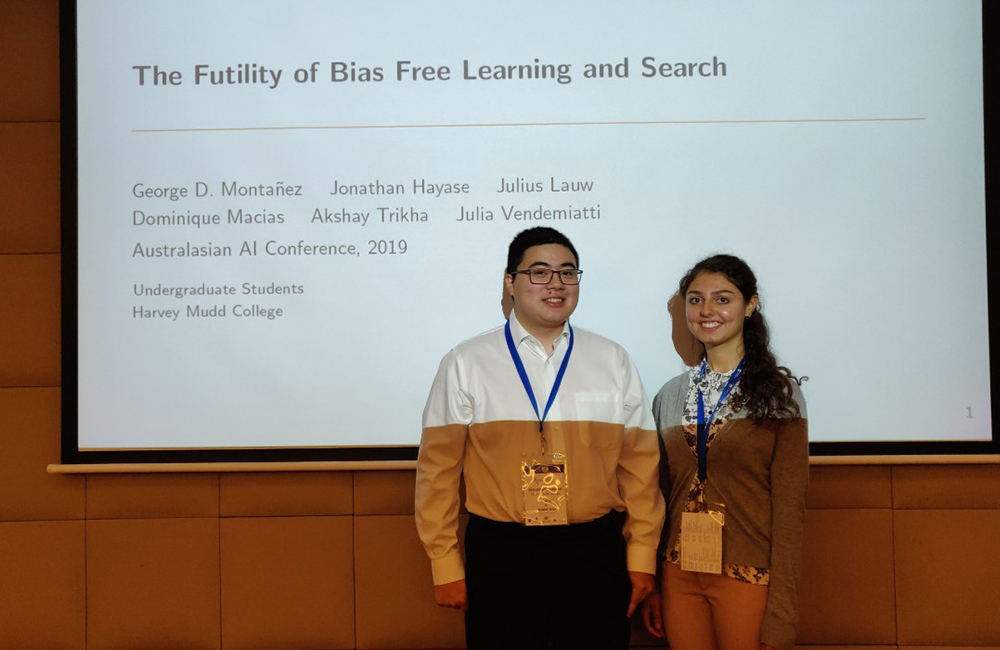

In early December 2019, Montañez, Jon Hayase ’20 and Julia Vendemiatti ’21 attended the 32nd Australasian Joint Conference on Artificial Intelligence in Australia to present their research. After winter break, Montañez and several students will present four more papers at the 12th International Conference on Agents and Artificial Intelligence (ICAART 2020) and the 11th International Conference on Bioinformatics Models, Methods and Algorithms (BIOINFORMATICS 2020), both in Malta. A sixth paper was published online by Business Horizons. The publications are listed below by venue.

32nd Australasian Joint Conference on Artificial Intelligence

“The Futility of Bias-Free Learning and Search”

George Montañez, Jon Hayase ’20, Julius Lauw ’20, Dominique Macias ’19, Akshay Trikha ’21, Julia Vendemiatti ’21

Building on the view of machine learning as search, researchers demonstrate the necessity of bias in learning, quantifying the role of bias (measured relative to a collection of possible datasets, or more generally, information resources) in increasing the probability of success.

ICAART 2020

“The Bias-Expressivity Trade-off”

Julius Lauw ’20, Dominique Macias ’19, Akshay Trikha ’21, Julia Vendemiatti ’21, George Montañez

Algorithms need bias to outperform uniform random sampling. However, bias is constraining: if an algorithm is highly biased, it tends to greatly favor some outcomes over others, regardless of what data it encounters. This limits an algorithm’s flexibility in responding to data, hindering its ability to change its behavior based on changing datasets. This research proves a set of results showing the trade-off between bias and expressivity (flexibility).
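As a rough illustration of the first claim, consider a toy search space of 10 items, 2 of which count as successes. This is a minimal sketch, not the paper's formal setup; the names `success_probability`, `uniform`, `biased` and `misaligned`, and all of the numbers, are hypothetical and chosen only for the example.

```python
def success_probability(distribution, targets):
    """Probability that a single draw from `distribution` lands in `targets`."""
    return sum(distribution[i] for i in targets)

n = 10
targets = {0, 1}  # hypothetical target set: 2 of the 10 items

# Uniform random sampling: every item is equally likely.
uniform = [1 / n] * n

# A fixed, biased sampler that favors the targets no matter what data it sees.
biased = [0.4, 0.4] + [0.2 / (n - 2)] * (n - 2)

# The same amount of bias pointed at the wrong items.
misaligned = list(reversed(biased))

p_uniform = success_probability(uniform, targets)
p_biased = success_probability(biased, targets)
p_misaligned = success_probability(misaligned, targets)

print(p_uniform, p_biased, p_misaligned)
```

In this toy case the well-aligned bias beats uniform sampling and the misaligned bias does worse. Because both biased samplers are fixed in advance, neither can adapt to data, which is the kind of inflexibility the summary describes.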

“Decomposable Probability-of-Success Metrics in Algorithmic Search”

Tyler Sam ’20, Jake Williams ’20, Abel Tadesse ’20, Huey Sun ’20 (POM), George Montañez

Prior work in machine learning has used a specific success metric, the expected per-query probability of success, to prove impossibility results within the algorithmic search framework. However, this success metric prevents us from applying these results to specific subfields of machine learning, such as transfer learning. To solve this issue, we define decomposable metrics, a category of success metrics for search problems that can be expressed as a linear operation on a probability distribution. Using an arbitrary decomposable metric to measure the success of a search, we prove theorems that bound success in various ways, generalizing several existing results in the literature. This research is part of the Walter Bradley Center Computer Science Clinic project.
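One simple reading of "a linear operation on a probability distribution" is an inner product between a 0/1 target vector and a distribution over the search space. The sketch below assumes that reading for illustration only; the paper's formal definition may differ, and the function name `decomposable_metric` and all values are hypothetical.

```python
def decomposable_metric(target, distribution):
    """Inner product of a 0/1 target vector with a probability distribution."""
    return sum(t * p for t, p in zip(target, distribution))

target = [1, 0, 0, 1]      # hypothetical: items 0 and 3 count as successes
p = [0.1, 0.2, 0.3, 0.4]   # one distribution over the four items
q = [0.4, 0.3, 0.2, 0.1]   # another distribution over the same items

# Linearity: scoring a 50/50 mixture equals averaging the two scores.
mixture = [0.5 * a + 0.5 * b for a, b in zip(p, q)]
score_mixture = decomposable_metric(target, mixture)
score_average = 0.5 * decomposable_metric(target, p) + 0.5 * decomposable_metric(target, q)

print(score_mixture, score_average)
```

Linearity is what makes such a metric convenient to bound: the score of a mixture of distributions is the same mixture of their individual scores.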

“The Labeling Distribution Matrix (LDM): A Tool for Estimating Machine Learning Algorithm Capacity”

Pedro Segura Sandoval ’19, Julius Lauw ’20, Daniel Bashir ’20, Kinjal Shah ’19, Sonia Sehra ’20, Dominique Macias ’19, George Montañez

Algorithm performance in supervised learning is a combination of memorization, generalization, and luck. By estimating how much information an algorithm can memorize from a dataset, we can set a lower bound on the amount of performance due to other factors such as generalization and luck. With this goal in mind, we introduce the labeling distribution matrix (LDM) as a tool for estimating the capacity of learning algorithms. The method attempts to characterize the diversity of possible outputs by an algorithm for different training datasets, using this to measure algorithm flexibility and responsiveness to data.

BIOINFORMATICS 2020

“Minimal Complexity Requirements for Proteins and Other Combinatorial Recognition Systems”

George Montañez, Laina Sanders ’21, Howard Deshong ’21

Proteins perform a variety of tasks, including binding to portions of DNA. In order to bind only to desired stretches of DNA, a protein’s physical structure must somehow encode enough information to make the relevant distinctions. This raises an interesting question: Can we use the tools of information theory to place a lower bound on the complexity a protein needs to perform a given recognition task? The goal of this paper is to answer this question in the affirmative.

Business Horizons

“Virtue as a Framework for the Design and Use of Artificial Intelligence”

Mitchell Neubert, George Montañez

The research uses Google as a case study to demonstrate the relevance of virtue for the ethical design and use of AI. “We describe a set of ethical challenges encountered by Google and introduce virtue as a framework for ethical decision making that can be applied broadly to numerous organizations,” Montañez says. “We also examine support for virtue in ethical decision making as well as its power in attracting and retaining the employees who develop AI and the customers who use it.”